Cade Roux

I have a question about this fiddle:

<>https://dbfiddle.uk?rdbms=sqlserver_2019&fiddle=0cf05f882eb24f53e9484f043af99446

I was having trouble with some XML going out by default in scientific notation which, while not incorrect or inaccurate, isn't terribly readable.

I was originally using FORMAT(floatcol, 'G17') because of the comments on [this documentation page](https://docs.microsoft.com/en-us/dotnet/standard/base-types/standard-numeric-format-strings#the-general-g-format-specifier) that:

> Note that, when used with a Double value, the "G17" format specifier ensures that the original Double value successfully round-trips. This is because Double is an IEEE 754-2008-compliant double-precision (binary64) floating point number that gives up to 17 significant digits of precision. We recommend its use instead of the "R" format specifier, since in some cases "R" fails to successfully round-trip double-precision floating point values. The following example illustrates one such case.

Well, today I found that it seems to add some extra insignificant digits to the string. In this particular example, they all "round trip" fine, but the G17 format has an extra insignificant digit.

And despite the fact that it might not affect me on a round-trip, I really don't want to be sending this to another party with extra digits.

Right now I am leaning towards changing to FORMAT('G'), but am not sure of the implications of that. Currently the format string used in these XML exports is a configuration setting stored in my system, so it's easiest to continue to use FORMAT since it requires no code changes.

So before I go testing on a variety of other values, my question is what is the difference between G and G17 overall and what problems might I run into using G instead of G17.

Top Answer

Paul White

`FORMAT` is implemented in CLR, but the overall question can be answered in T-SQL terms.

To **guarantee** lossless conversion, 17 digits are needed.

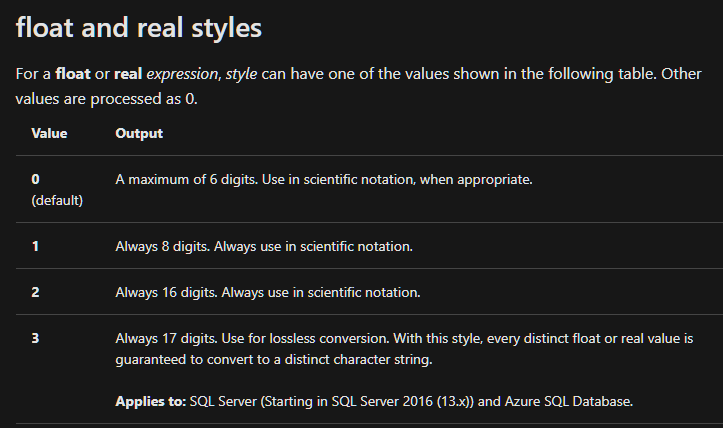

This is [mentioned in the documentation](https://docs.microsoft.com/en-us/sql/t-sql/functions/cast-and-convert-transact-sql#float-and-real-styles) for `CAST` and `CONVERT`:

Using `G17` is equivalent to `CONVERT(type, value, 3)`.

If you use `CAST`, style zero is applied by default (6 digits).

If you use SQL Server 2014 or earlier, style 3 is not available.

<>https://dbfiddle.uk/?rdbms=sqlserver_2019&fiddle=b976ca181d15116655200fa81090244d